Hi all,

I’m working on generating a hexahedral mesh in Cubit from voxelized data produced by a Finite Difference Time Domain (FDTD) simulation.

Each voxel is axis-aligned and defined by its center coordinates and dimensions. The goal is to create one HEX8 element per voxel, ideally resulting in a merged and compact mesh that can be exported to Exodus format.

I’ve written a minimal working example (MWE) that reads in the voxel data and:

- Creates one brick per voxel

- Moves each brick to the correct location

- Meshes each brick as a single hexahedral element

This works for small cases, but performance is a major concern. For example:

- Meshing 70,000 voxels takes approximately 30 minutes

- My production use cases involve between 10 million and 100 million voxels

To avoid further slowdown, I’m currently skipping the geometry merge step, though I would prefer to merge the result to reduce file size. I haven’t yet used Cubit’s parallel features, but this seems like a good use case for them. I also have significant computational resources to bring to bear for this task.

Any suggestions or ideas to improve performance are very welcome.

#Import packages

import numpy as np

import cubit

from timeit import time

#Set file names

voxel_data_file = 'voxel_data.txt.gz'

exo_file = 'voxel_mesh.exo'

#Initialize cubit

cubit.init(['cubit', '-nojournal', '-nographics'])

cubit.reset()

#Some default settings for improved performance

cubit.cmd('echo off')

cubit.cmd('undo off')

cubit.cmd('warning off')

cubit.cmd('info off')

cubit.cmd('set default autosize off')

#Load voxel centers

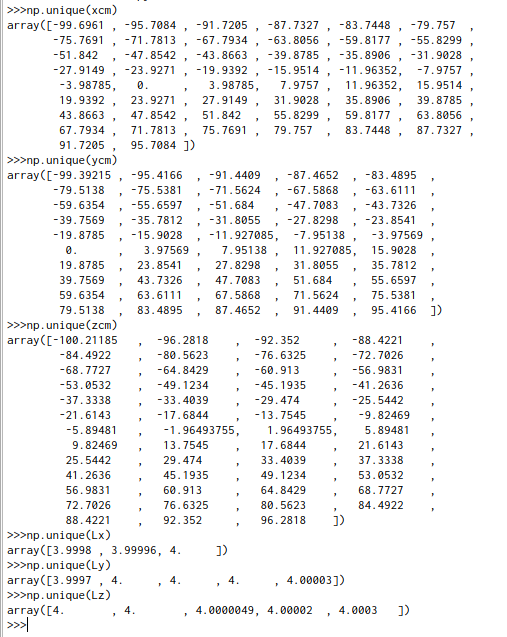

xcm, ycm, zcm, Lx, Ly, Lz = np.loadtxt(voxel_data_file)

nvoxel = int(xcm.shape[0])

vlength = len(str(nvoxel))

#Create bricks for each voxel

t0 = time.time()

for k in range(nvoxel):

cubit.cmd(f'brick x {Lx[k]} y {Ly[k]} z {Lz[k]}')

voxel_id = cubit.get_last_id('volume')

cubit.cmd(f'volume {voxel_id} move x {xcm[k]} y {ycm[k]} z {zcm[k]}')

cubit.cmd(f'block 1 vol {voxel_id}')

cubit.cmd(f'vol {voxel_id} interval 1')

cubit.cmd(f'vol {voxel_id} scheme map')

cubit.cmd(f'mesh vol {voxel_id}')

print(f'Voxel {k+1:0{vlength}d} of {nvoxel} created at time {time.time()-t0:.2f}', flush=True)

assert cubit.get_element_count() == nvoxel, "Something is off with the mesh."

#Add to block

cubit.cmd('block 1 element HEX8')

#Save

cubit.cmd(f'export genesis "{exo_file}" overwrite')

print(f'\nVoxel mesh created in {time.time()-t0:.2f} seconds.', flush=True)

voxel_data.txt.gz (49.9 KB)